Towards a Responsible AI Framework for the Design of Automated Decision Systems in DFO: a Case Study of Bias Detection and Mitigation

Revision as of 16:36, 3 December 2021

Executive Summary

Automated Decision Systems (ADS) are computer systems that automate, or assist in automating, part or all of an administrative decision-making process. Currently, automated decision systems driven by Machine Learning (ML) algorithms are being used by the Government of Canada to improve service delivery. The use of such systems can have benefits and risks for federal institutions, including the Department of Fisheries and Oceans Canada (DFO). There is a need to ensure that automated decision systems are deployed in a manner that reduces risks to Canadians and federal institutions and leads to more efficient, accurate, consistent, and interpretable decisions.

In fiscal year 2020-2021, the Office of Chief Data Officer (OCDO) and Information Management and Technical Services (IMTS) co-sponsored an Artificial Intelligence (AI) pilot project to determine the extent to which AI, coupled with other data analytics techniques, can be used to gain insights, automate tasks, and optimize outcomes for different priority areas and business objectives at DFO. The project was funded through the 2020-21 Results Fund and was developed in partnership with programs and business areas within DFO. It produced three proofs of concept:

- Predictive models for detecting vessels’ fishing behavior, to fight noncompliance with fishing regulations.

- A predictive model for the identification and classification of endangered North Atlantic Right Whale upcalls, to minimize vessel strikes on endangered whales.

- A predictive model for clustering ocean data, to advance ocean science.

Supported by the 2021-2022 Results Fund, the OCDO and IMTS are prototyping automated decision systems based on the outcome of the AI pilot project. The effort includes defining an internal process to detect and mitigate bias, a potential risk of ML-based automated decision systems. A case study is designed to apply this process to assess and mitigate bias in the predictive model for detecting vessels’ fishing behavior.

In the long term, the goal is to develop a comprehensive responsible AI framework to ensure the responsible use of AI within DFO and compliance with the Treasury Board’s Directive on Automated Decision-Making.

Introduction

Unlike traditional Automated Decision Systems (ADS), Machine Learning (ML)-based ADS do not follow explicit rules authored by humans. ML models are not inherently objective. Data scientists train models by feeding them a data set of training examples, and the human involvement in the provision and curation of this data can make a model's predictions susceptible to bias. As a result, applications of ML-based ADS have far-reaching implications for society, ranging from new questions about legal responsibility for mistakes made by these systems to the retraining of workers displaced by these technologies. There is a need for a framework that ensures decisions are accountable and transparent and that supports ethical practices.

Responsible AI

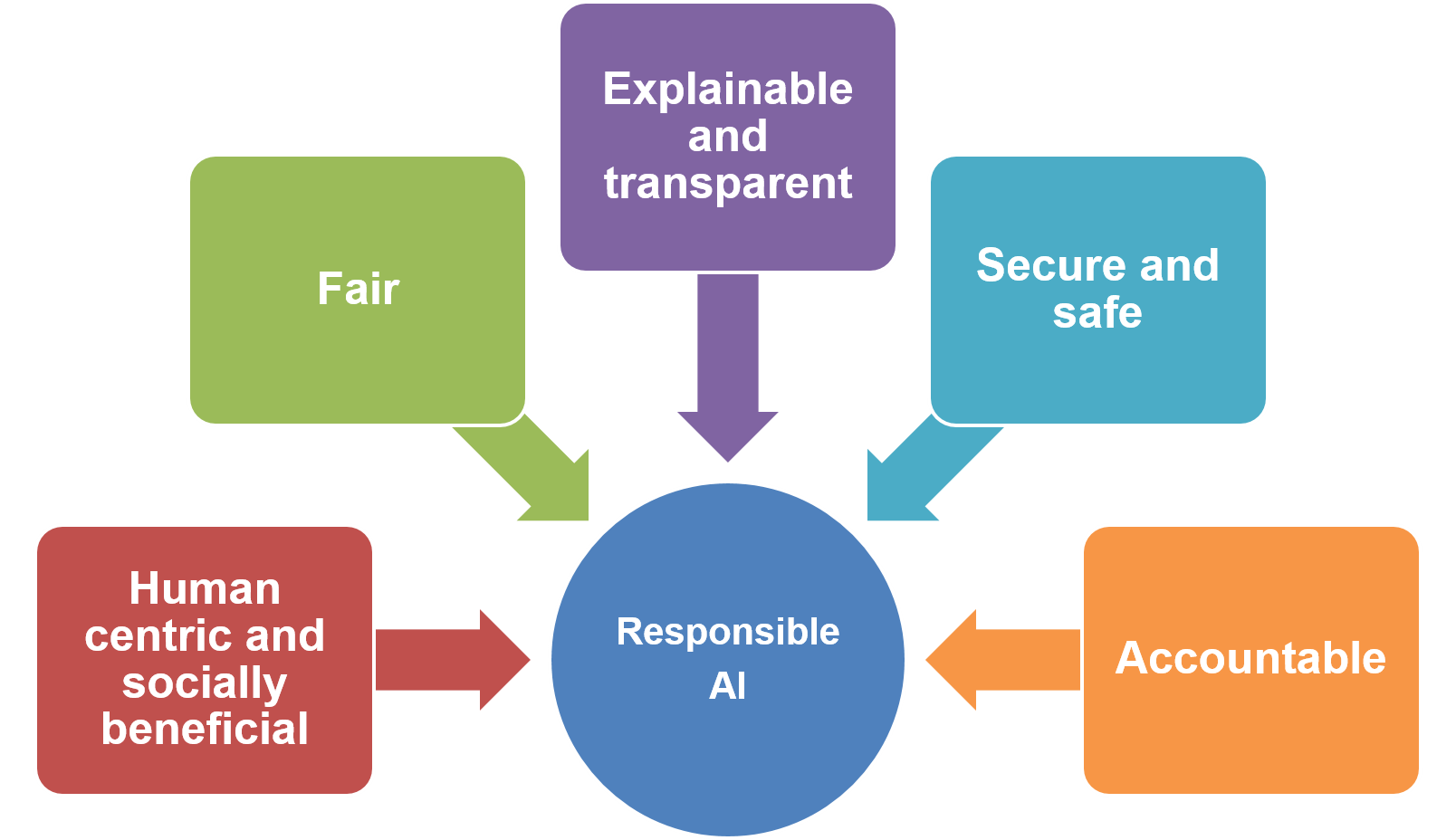

Responsible AI is a governance framework that documents how a specific organization addresses the challenges around artificial intelligence (AI) from both an ethical and a legal point of view. To support Responsible AI practices, organizations have identified principles to guide the development of AI applications and solutions. According to the study “The global landscape of AI ethics guidelines” [1], some principles are mentioned more often than others. However, Gartner has concluded that a global convergence is emerging around five ethical principles:

Definitions of these guiding principles are given in [1].